Notes from my reading of The First Law of Complexodynamics.

As I was reading the infamous blog post on “The First Law of Complexodynamics” I couldn’t help myself from making notes. Notes that I intend to write for myself, but I want to share with the world to hold myself accountable for proper articulation. In essence, the purpose is not to inform you, the reader, but more for me to refer back to.

As such, there are some really nice points I have captured, that I intend to share with all of you. First of all, I have no shame or embarrassment in admitting that I do not yet understand them fully, but I think it is important to acknowledge that. Some of you esteemed readers might love the work I do, but I want to earn that privilege by demonstrating my work.

So, here are the points that I think are interesting from the complexodynamics blog by Scott Aronson.

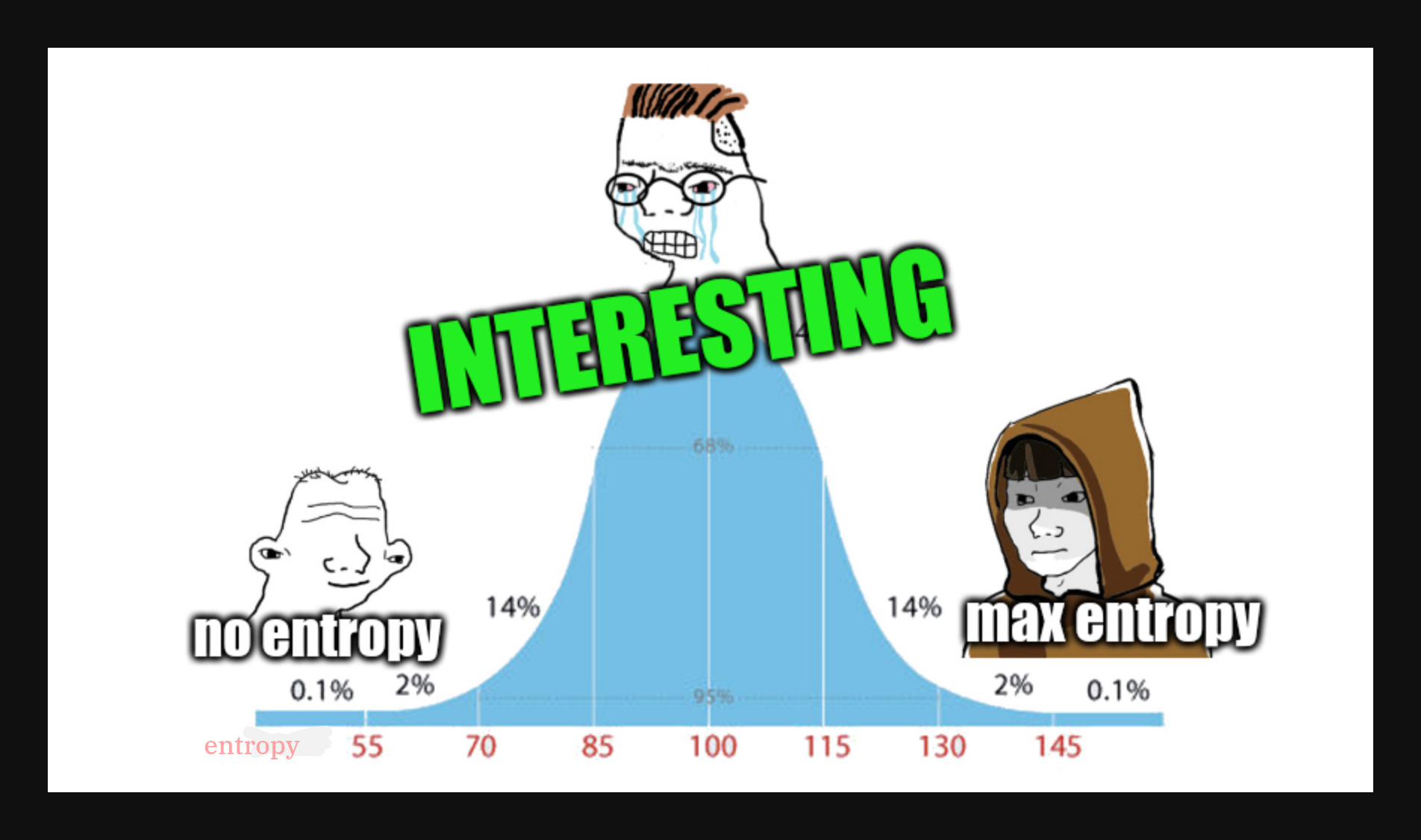

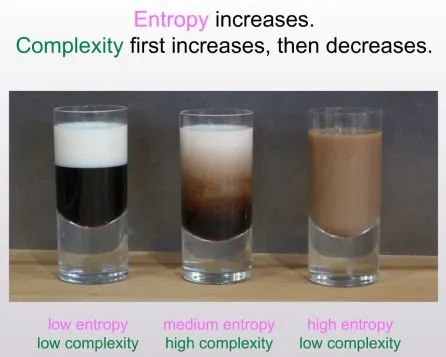

entropy vs interestingness/complexity

this was sparked by Sean Carroll’s question “why does ‘complexity’ or ‘interestingness’ of a physical systems seem to increase with time then hit a maximum and decrease, in contrast to entropy, which of course always increases”

And an illustrative example was shown. And key references include “From Eternity to Here” and his slides

Reflection I have always found this interesting, great to read such well articulated thoughts. I should’ve read these sooner. And the computationally limited observer is also on-point. We can’t assume infinite compute. Perhaps i’m anthropomorphizing here, but things that we don’t understand, we tend to think too chaotic or random. For example, if you have never written maths, then equations look chaotic/random. Or if you never navigated indian streets, then the traffic looks “random” and this was an excellent argument on formalizing that.

Kolmogorov Complexity as proxy for entropy

As a first step, let’s use Kolmogorov complexity to define entropy.

Already it’s not quite obvious how to do that. If you start, say,

a cellular automaton, or a system of billiard balls, in some simple

initial configuration, and then let it evolve for a while according

to dynamical laws, visually it will look like the entropy is going up.

But if the system happens to be deterministic, then mathematically,

its state can always be specified by giving (1) the initial state, and (2)

the number of steps t it’s been run for. The former takes a constant

number of bits to specify (independent of t), while the latter takes

log(t) bits.

It follows that, if we use Kolmogorov complexity as our stand-in

for entropy, then the entropy can increase at most logarithmically

with t—much slower than the linear or polynomial increase that

we’d intuitively expect.

There are at least two ways to solve this problem. The first is to

consider probabilistic systems, rather than deterministic ones.

In the probabilistic case, the Kolmogorov complexity really does

increase at a polynomial rate, as you’d expect. The second solution

is to replace the Kolmogorov complexity by the resource-bounded

Kolmogorov complexity: the length of the shortest computer program

that outputs the state in a short amount of time (or the size of the

smallest, say, depth-3 circuit that outputs the state—for present

purposes, it doesn’t even matter much what kind of resource bound

we impose, as long as the bound is severe enough).

Even though there’s a computer program only log(t) bits long to

compute the state of the system after t time steps,

that program will typically use an amount of time that grows with

t (or even faster), so if we rule out sufficiently complex programs,

we can again get our program size to increase with t at a polynomial rate.

this presents the Kolmogorov complexity as a way to calculate entropy, I wasn’t aware of this at all. But upon deeper dive, made sense.

Kolmogorov Complexity of a string x is the shortest program (in some Turing Universal Programming Language) that outputs the string x and halts. However sophistication as defined, is slightly different. Let’s get to it later.

This intuitively feels right. Kolmogorov Complexity is essentially a way to describe the most compressed representation of a string, where the decompressor is a universal turing machine. So, to use it to define entropy is clever.

Now comes the tricky part, developing an intuition for the argument presented. I went with chatgpt, trying to grok at the idea of depth-3 circuits, probabilistic systems. Let me try and create an intuition to you, the reader

The probabilistic systems

Since in probabilistic system, the state can’t be simulated, we will need a compressed representation of the given state. This makes intuitive sense, as probabilstic systems only give us a distribution and not the exact state, so entropy would scale at least linearly if not polynomially

The depth-3 circuits

In computer science, circuits enable computation. here depth implies the number of layers of gates a given circuit has, giving rise to computation. A depth-1 circuit would only have a single layer of logic gates, and very little meaningful comptutation can be performed. This is apparently useful in AC0 and I have learned a very useful information - I have little knowledge of theoretical computer science and how it connects to modern computers, something which I always thought I understood because I understoond logic gates and boolean algebra quite well.

The time-bound

This proposition is where I can provide a useful intuition to you, the reader. But I’m afraid you’d first need a quick refresher in chess of all things.

A word of caution, chess appears to a probabilistic system even though its rules are fully deterministic, as at the end of each turn, you need to sample a valid move from the possibility space of all legal moves. However, if we constrain it to a boring game between two stockfishes with fixed seeds and fixed compute depth, then the system becomes deterministic.

The game between Vidit vs Yuriy is purely for illustrative purposes as I’m a big fan of vidit. :D

This is move 3 of a game between Vidit Gujrathi vs Yuriy Kuzubov. To represent this exact board position, we can do so in 2 ways.

First one is called PGN or Portable Game Notation, this notation assumes we always starts at the default starting position of a standard chess game, and notes the moves each player has made, along with some metadata. Ignoring that metadata, this exact board position can be compressed to simply.

1. e4 e5 2. Nf3 Nf6 3. Nxe5However, to reconstruct the current board position, we need to simulate each move. This requires time; 5 turns of time to be exact.

But if we don’t allow for such simulation, we can use something known as FEN or Forsyth-Edwards Notation, which encodes all the information required to represent the current “game state”. To describe this exact board position using FEN, we need the following string

rnbqkb1r/pppp1ppp/5n2/4N3/4P3/8/PPPP1PPP/RNBQKB1R b KQkq - 0 3This notation captures the state of each piece at any given state. Without requiring any simulation. This is what the time constraint is trying to impose. To allow just enough time to exclude sufficiently complex programs.

I would like to note that this analogy is imperfect, like all analogies. But I believe this provides an intuition that paints the why behind the train of thought.

On to complexity

The article presents us with a few concepts here, namely, sophistication, Kolmogorov structure functions and algorithmic statistics, this is primarily done to describe the intuition behind the observation that “complexity” or “interestingness” drops beyond a certain point while entropy keeps increasing. When entropy is too much, it is just random.

Sophistication

So, it is defined as the following

For a given set S of n-bit strings, let K(S) be the number of bits in the shortest computer program that outputs the elements of S and then halts. Also, given such a set S and an element x of S, let K(x|S) be the length of the shortest program that outputs x, given an oracle for testing membership in S. Then we can let the sophistication of x, or Soph(x), be the smallest possible value of K(S), over all sets S such that

- x belongs to S and

- K(x|S) >= log2(|S|) - c, for some constant c (In other words, one can distill all the “nonrandom” information in x just by saying that x belongs to S)

Let’s unpack that mathematical jargon for a bit. K(S) takes in a set S, and outputs all the elements of S and then halts. we can think of a program that looks like this (in python)

def K(S: set):

for x in S:

print(x)If we introduce an “oracle” or an external system that tells us whether x is a member of the set S, we can think of the oracle as something like this

def ask_oracle(x: str, S: set):

return x in SNow, sophistication would be defined as the smallest set S, on which x is a general (or random) member of x. However, to avoid trivial cases like S = {x} (where x is the only member of S), the value K(x|S) must be at least log2(|S|)-c.

Why, you might ask? Because |S| is the number of elements in S, and log2(|S|) is the number of bits in S. c because it might not require exactly that many bits always so a little variation is expected.

This means, x must look like a typical or random member of S and S is the most compressed form of x while avoiding trivial cases such as S={x}

Now, Sophistication or Soph(x) is defined as the length of the shortest compter program that describes a set S of which x is a “random” or “generic” member of.

For example, when the Komologorov Complexity K(x) of x is low, Soph(x) is also low, however, when x is uniformly-random, then Soph(x) is also low as it is simply the set of all N bit strings.

But when x is “interesting” then the Soph(x) tends to go up as the program would need to describe the exact distrbution of which x is a “generic” member of.

Thoughts

As I reflect on this, I can see how this could inspire so many things. From precrambian explosion to now the explosion in ML models, previously the rapid experimentation in device form factors, everything falls into this description.

Perhaps I’m romanticizing it, but it really resonanted with me at a level that most literature today, fails to capture.

Footnotes

ChatGPT and Claude Conversations

- Claude Conversation: https://claude.ai/share/61121013-b494-4b46-9d28-77d6eea13663

- ChatGPT Conversation: https://chatgpt.com/share/68641f5f-c8e4-8013-aa88-298078414153